Thinking Inside the Box

Vivid House is an immersive audiovisual showcase that demands to be experienced.

Text:/ Christopher Holder

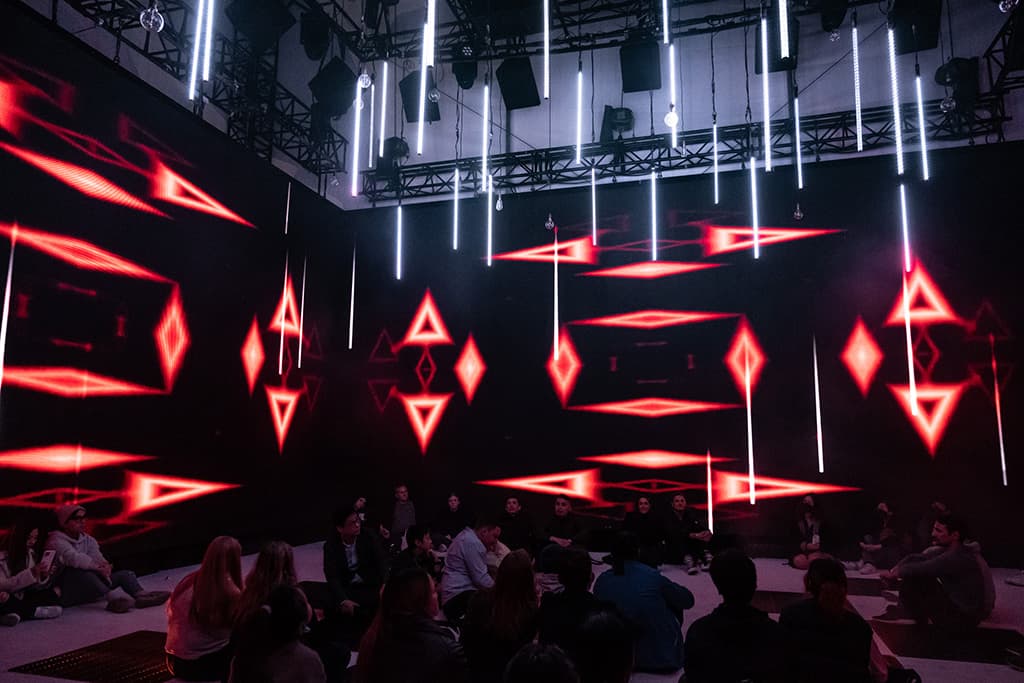

Vivid House is an immersive box. An AV cube, combining LED, 50-odd loudspeakers, LED tubes, lighting and haze. When people bandy about the word ‘immersive’ Vivid House is like the Mick Dundee riposte: ‘no, this is immersive.’

Vivid House is the brainchild of Des O’Neill and his aFX-Global team, who are specialists in immersive AV experiences. The idea is that the box is reproducible and provides an immersive blank canvas for creators.

Vivid commissioned aFX-Global to provide just such a box, calling it Vivid House.

DESIGN TEAM

To get the concept off the ground, Des O’Neill created a team (himself, lighting designer Peter Rubie and creative sound designer/composer Peret von Sturmer) that could demonstrate its ability not only to design and build the AV box but also to design immersive content, selling the sizzle, so to speak.

Together they created a piece called Progressum, an impressive work that combines all aspects of the immersive offering, including immersive audio, lighting design and visuals.

“Our original pitch was to use a porous scrim and projection rather than LED,” explains Des O’Neill. “That way we could choose to project light through the screens or reflect light off the screens back into the room, and it would allow us to place loudspeakers at head height behind the scrims for better localised audio.”

It turns out that Vivid had some existing immersive content up its sleeve and wanted to commission a further piece, so Des needed to work with LED to get the project over the line.

“It meant that we ended up with a more unique spatial audio system that probably delivers less accuracy in terms of pinpointing of sound, but still creates an immersive environment with lots of motion.”

The audio exists in four layers or rings: under floor, under screen, above screen and overhead, plus a subwoofer component. Vertical panning happens between the layers, with the under-screen and under-floor loudspeakers helping to pull the image down when required. Some 50 Meyer Sound loudspeakers were employed all up, supplied by Coda Audio.
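To make the idea of panning between two vertical layers concrete, here is a minimal sketch of a generic constant-power pan law. It is an illustration only and an assumption on our part: the article does not describe how the IOSONO renderer actually distributes level between layers, and the function name `vertical_pan_gains` is hypothetical.

```python
import math


def vertical_pan_gains(position: float) -> tuple[float, float]:
    """Return constant-power gains for (lower_layer, upper_layer).

    position: 0.0 places the sound fully in the lower layer,
    1.0 places it fully in the upper layer.
    This is a textbook pan law, not IOSONO's rendering algorithm.
    """
    if not 0.0 <= position <= 1.0:
        raise ValueError("position must be between 0.0 and 1.0")
    angle = position * math.pi / 2
    return math.cos(angle), math.sin(angle)


# Halfway between two layers, both layers get equal gain.
lower, upper = vertical_pan_gains(0.5)
print(round(lower, 3), round(upper, 3))
```

Because the two gains always satisfy lower² + upper² = 1, the summed acoustic power stays roughly constant as a sound travels from one ring to the next.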

GETTING IT RIGHT IN THE MIX

From an audio perspective, the work starts for Des O’Neill and his team in the studio. Des has been representing the IOSONO immersive audio system in Australia since 2015. Now owned by Barco, IOSONO is especially powerful for its ability to translate an object-based mix into almost any other similar immersive audio environment. From a highly granular wavefield synthesis render (the gold standard of immersive audio) to an amplitude/level-mixed surround-sound situation, IOSONO places audio without being overly flummoxed by the number and positioning of the loudspeakers you have at your disposal.

“We encouraged the artists designing for Vivid House to not concern themselves with where the loudspeakers are situated in the studio but to simply nominate where they want sound to emanate from and allow the IOSONO rendering engine to do the rest.” [See the image and render of the aFX studio below.]

RENDER TIME

Each piece was mixed in Nuendo using a VST plugin called Spatial Audio Workstation, which spits out an IMF file upon completion. This multi-channel spatial audio file is used by the IOSONO renderer to decode and direct audio traffic in the installation depending on where the loudspeakers are placed. “It was all mixed to about 98% before we got on site. We had the artists in the studio and we reassured them that the studio mix would translate well in Vivid House thanks to what we knew about the IOSONO renderer, which takes and stores the IMF metadata file (you’re not printing stems, as such) such that every sound object knows its place in the mix and its relationship to all the other objects, which then allows it to be scaled.”

I AM GRUNTY

The IOSONO rendering engine comes in a 64- or 128-channel frame size, using MADI cards to route out to the amps and loudspeakers (via DirectOut Technologies Andiamo converters). Des O’Neill elaborates: “We measured out on a grid the Cartesian coordinates for all the loudspeakers in Vivid House. We made what’s called a loudspeaker file, and then we put that loudspeaker file into the IOSONO unit itself. In that way it’ll do all the spatial time aligning. The system also has enough DSP to take care of system tuning, including phase response, frequency response and an SPL measurement of each speaker in the system. IOSONO has unlimited IIR and FIR filters on the outputs, so it’s good for EQ matching different types of speakers. In our case, all our loudspeakers were from Meyer Sound but various models and vintages. IOSONO aligned and tuned the system and ensured the high frequency content, especially, sounded coherent.”
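As a rough illustration of why measured Cartesian coordinates are enough to time-align a system, the sketch below derives a per-loudspeaker delay from each speaker's distance to a reference listening point. Everything in it is an assumption for illustration: the speaker names, the positions, the reference point and the simple "delay everything to match the farthest speaker" rule. It is not the IOSONO loudspeaker file format, and a wavefield synthesis renderer does considerably more than this.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate value for air at about 20 °C


def alignment_delays_ms(speakers, reference):
    """Delay (in ms) to add to each speaker so that all arrivals at the
    reference point coincide with the arrival from the farthest speaker."""
    distances = {name: math.dist(pos, reference) for name, pos in speakers.items()}
    farthest = max(distances.values())
    return {
        name: (farthest - d) / SPEED_OF_SOUND_M_S * 1000.0
        for name, d in distances.items()
    }


# Hypothetical positions in metres (x, y, z); not the real Vivid House layout.
speakers = {
    "overhead_centre": (0.0, 0.0, 5.0),
    "above_screen_north": (0.0, 4.8, 4.9),
    "under_floor_south": (0.0, -3.0, -0.3),
}
reference = (0.0, 0.0, 1.6)  # assumed ear height at the centre of the room

delays = alignment_delays_ms(speakers, reference)
for name, delay in delays.items():
    print(f"{name}: {delay:.2f} ms")
```

The farthest loudspeaker gets zero delay and nearer ones are held back by the difference in travel time, which is the basic idea behind coordinate-driven alignment.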

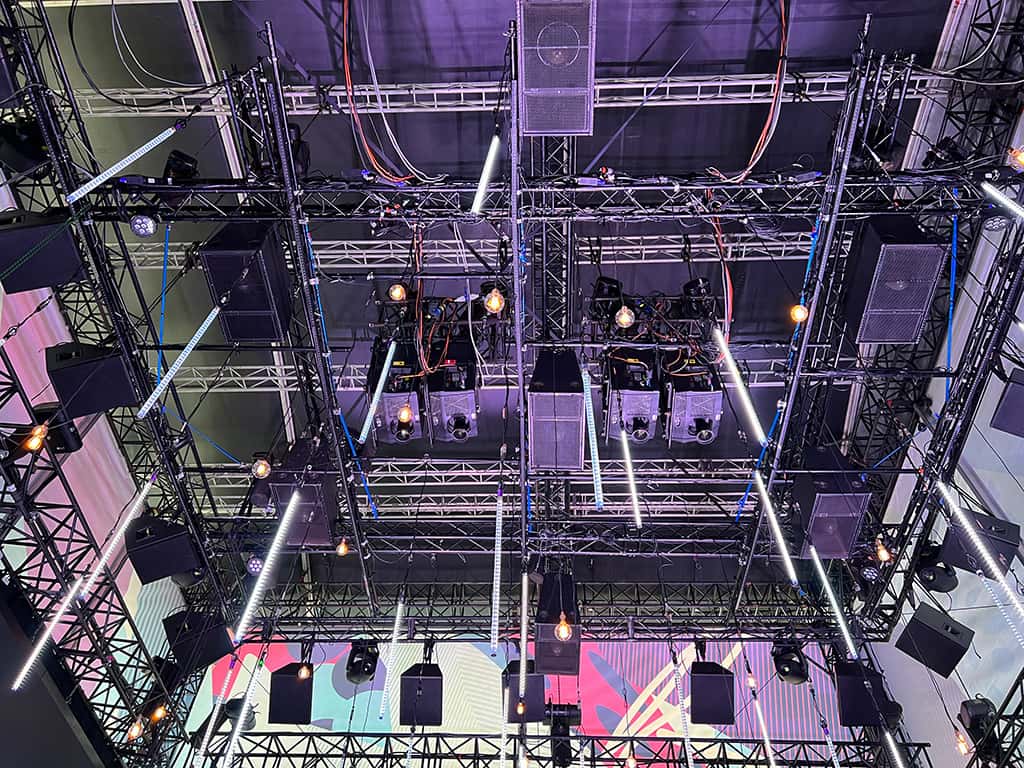

MORE THAN LED

In addition to the 4x 9.6m-wide by 4.8m-high LED walls (supplied by TDC), the space is filled with 40x Dreampix Tubes, each one housing 40 double-sided pixels suspended at staggered heights above the heads of the audience. The collective 3200 pixels fill the vertical space in the air and extend the same content presented on the LED screens into the middle of the room, which adds a third visual dimension and results in the audience being fully immersed in the visuals presented on the LED screens. In the top layer of the space are 30x Dreampix Strips, which provide a further 1200 pixels aimed downwards to give a fifth side to the video content. 40x Filament Globes hang randomly in amongst the tubes to provide a warm earthy glow, and a tungsten contrast to the digital LED tubes.
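The pixel counts quoted above can be checked with a few lines of arithmetic. The sketch assumes that "40 double-sided pixels" means 40 pixel positions on each of two faces per tube, and it infers the per-strip pixel count from the stated total; neither figure is spelled out in the fixture descriptions.

```python
# Tubes: 40 fixtures, 40 pixel positions each, lit on two sides (assumed reading).
tubes = 40
pixels_per_tube_side = 40
sides_per_tube = 2
tube_pixels = tubes * pixels_per_tube_side * sides_per_tube

# Strips: 30 fixtures sharing the stated 1200 pixels.
strips = 30
strip_pixels_total = 1200
pixels_per_strip = strip_pixels_total // strips  # inferred, not stated

print(tube_pixels, pixels_per_strip, tube_pixels + strip_pixels_total)
# prints: 3200 40 4400
```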

8x Axcor 400 Moving Profiles provide texture and animations that fill the space in between the suspended LED tubes. Their shutters are also used extensively in the digital section of the piece, with thin blades cutting through the haze to simulate the passage of time over the audience.

On the floor layer below the LED screens sit a dozen Fusion Bar QXV LED battens which provide punches of colour upwards into the middle of the room. A further dozen battens sit below the floor to provide ripples of colour below the audience’s feet, made possible by a number of metal grates in the structure’s floor. Also scattered through these grates are 8x Chauvet R1 Wash moving fixtures which provide a multitude of effects, ranging from wide blasts of white light alongside sounds of steam to moving beams providing slow-moving waves of light. 2x Look Solutions Unique 2 hazers sit below the floor layer and are strategically placed to distribute the haze in such a way that it rises out of the grates and up into the air, providing another visual dimension as rays of light from the overhead projectors and moving lights dance in time with the sound composition.

PROGRAMMING LIGHTING & VISUALS

The lighting was programmed on a Chroma-Q Vista S1 console. Pixel control was done with an Art-Net merge from both Vista and a separate Madrix lighting controller, which drives all of the more detailed pixel elements in the show. Madrix is first and foremost an LED pixel-mapping tool, but in this instance it was also used to generate all of the content used in the video design, meaning the same visuals used in the LED tubes could be slightly tweaked to work with the higher resolution LED screens running at 10240×1280. “What was great about the Madrix system was I was able to manipulate and capture the content in real time, without the need to wait for rendering time,” commented Lighting Designer Peter Rubie. “This really helped me as a Lighting Designer who is used to working in real time with colour textures and effects.”
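For readers unfamiliar with merging two control sources, the sketch below shows one common strategy, highest-takes-precedence (HTP), applied to two DMX frames of the kind Art-Net carries. This is an assumption for illustration: the article does not say which merge mode was used or where in the signal chain the merge happened, and the function name `htp_merge` and the sample values are hypothetical.

```python
def htp_merge(frame_a: bytes, frame_b: bytes) -> bytes:
    """Merge two DMX frames channel by channel, keeping the higher value."""
    if len(frame_a) != len(frame_b):
        raise ValueError("frames must have the same number of channels")
    return bytes(max(a, b) for a, b in zip(frame_a, frame_b))


# Four illustrative channels from each source (a real universe has up to 512).
console_frame = bytes([255, 0, 10, 0])
pixel_mapper_frame = bytes([0, 128, 20, 0])

merged = htp_merge(console_frame, pixel_mapper_frame)
print(list(merged))  # prints: [255, 128, 20, 0]
```

With HTP, either source can raise a channel but neither can pull it below what the other is sending, which suits intensity-style channels such as pixel brightness.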

Content was then stitched together in DaVinci Resolve and played back on TDC’s Disguise d3 Media Server.

All show control systems are triggered off SMPTE timecode coming from the media server, allowing for the precise sync of all lighting and video elements to the audio composition. Much of the programming time in the lighting and video was spent pinpointing specific 3D sound elements that move around the audience in an immersive XYZ space. According to Peter Rubie: “Being able to complement the video design with lighting around and below the audience and to be able to synchronise the position and direction of the lighting in relation to the sound objects gave us a truly immersive experience and a point of difference from the other video installations using the space.”
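To show what timecode-driven cueing amounts to, here is a minimal sketch that converts SMPTE-style `HH:MM:SS:FF` timecode into seconds so cues can be compared against the audio timeline. The 25 fps non-drop-frame rate, the cue names and the cue times are assumptions for illustration; the article does not state the frame rate or any actual cue points.

```python
def timecode_to_seconds(timecode: str, fps: int = 25) -> float:
    """Convert non-drop-frame 'HH:MM:SS:FF' timecode to seconds."""
    hours, minutes, seconds, frames = (int(part) for part in timecode.split(":"))
    if frames >= fps:
        raise ValueError(f"frame value {frames} is out of range for {fps} fps")
    return hours * 3600 + minutes * 60 + seconds + frames / fps


# Hypothetical cue list: (cue name, timecode at which it fires).
cues = [
    ("haze_burst", "00:01:30:00"),
    ("shutter_sweep", "00:02:15:12"),
]

for name, tc in cues:
    print(f"{name} fires at {timecode_to_seconds(tc):.2f} s")
```

Because every system reads the same running timecode, a lighting cue and a sound object placed at the same timecode land together regardless of which device executes them.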

IMMERSIVE FUTURE

‘Immersive Experiences’ are now a big deal. Having been an immersive evangelist for years, Des O’Neill has some thoughts on the current trend: “The word ‘immersive’ gets used for everything now just because it’s big or because it takes over your peripheral vision. But I don’t think that’s sufficient. For example, VR is great, but the problem is it’s isolating. In the case of Vivid House, everyone’s having the same spatial experience and they’re actually sharing it. You can see how families and friends respond and it elevates the experience. Vivid House provides a truly multi-faceted immersive offering. You’ve got textures and shapes forming in mid-air via lighting beams coming through that haze. The sound is coming from all around you. Lighting comes into the space, and obviously the video is there as well.

“To see immersive experiences like Vivid House develop and expand, we need to get the tools into the hands of the creatives. You can do your best to explain something like Vivid House to an experienced audio or visual designer, but until they experience it and develop content for it, it’s all a bit theoretical. I’d like to see what we’ve done help push the boundaries of creativity.”

CONTACTS

aFX: www.afx-global.com

Intense Lighting Hire: intenselightinghire.com

TDC: tdc.com.au

Coda Audio: coda-audio.com.au

Vivid Sydney: vividsydney.com/event/light/vivid-house

AUDIO SYSTEM

Vivid House uses an IOSONO processor (an object-based spatial audio renderer that utilises a Wavefield Synthesis algorithm) to render audio objects in real time to the attached Meyer Sound loudspeaker array, which consists of 50 loudspeakers distributed across four layers plus a subwoofer layer. Each loudspeaker and amplifier channel gets a direct feed from the IOSONO processor. Each loudspeaker in the system is calibrated to deliver a sound pressure level of 85dB SPL at a central listening position in the space, using calibration pink noise @ -20dB RMS, which ensures plenty of headroom in the system. The IOSONO processor carries out time and phase alignment through the creation of a Loudspeaker File, which informs the system of where all the attached loudspeakers are located in 3D space. The IOSONOinside processor comes with system tuning tools that allow for multiple measurement mics, phase check, SPL check, frequency response and the creation of target EQ curves for each of the speaker outputs.
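A short worked example shows where the headroom comes from. It assumes the -20dB figure is relative to digital full scale, that the loudspeakers and amplifiers stay linear up to that point, and that the 50 loudspeakers sum incoherently (as they roughly would with uncorrelated noise). These are our assumptions for illustration, not measured results from the installation.

```python
import math


def spl_at_level(cal_spl_db: float, cal_level_db: float, signal_level_db: float) -> float:
    """SPL produced at a given signal level, scaled from the calibration point."""
    return cal_spl_db + (signal_level_db - cal_level_db)


def incoherent_sum_db(spl_db: float, count: int) -> float:
    """Combined SPL of `count` equal, uncorrelated sources."""
    return spl_db + 10 * math.log10(count)


# One speaker calibrated to 85 dB SPL with noise at -20 dB: level at 0 dB.
print(spl_at_level(85.0, -20.0, 0.0))  # prints: 105.0

# All 50 speakers reproducing uncorrelated noise at the calibration level.
print(round(incoherent_sum_db(85.0, 50), 1))
```

Under these assumptions each loudspeaker has about 20dB in hand above the calibration level, and the whole array playing at calibration level sums to roughly 102dB SPL at the listening position.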
